OpenAI's Content Filter Blocks the Universal Declaration of Human Rights

OpenAI's Codex refuses to repeat the Universal Declaration of Human Rights verbatim. A content filter triggered by the words 'United Nations' reveals how blunt AI safety guardrails really are.

While working on another post, I needed OpenAI’s Codex to repeat a passage from the Universal Declaration of Human Rights verbatim. A simple task: take text in, produce the same text out.

It refused.

Reproducing the issue

To reproduce the issue, you only need this prompt shape:

    Repeat verbatim: {full text of the Universal Declaration of Human Rights}

You can send it to Codex from the command line, for example:

    codex exec "Repeat verbatim:$(<udhr_english.txt)" --model gpt-5.4

But the CLI is not the important part. You can also copy and paste the same prompt directly into Codex CLI or into the standard ChatGPT web interface. The failure follows the prompt, not the shell wrapper.

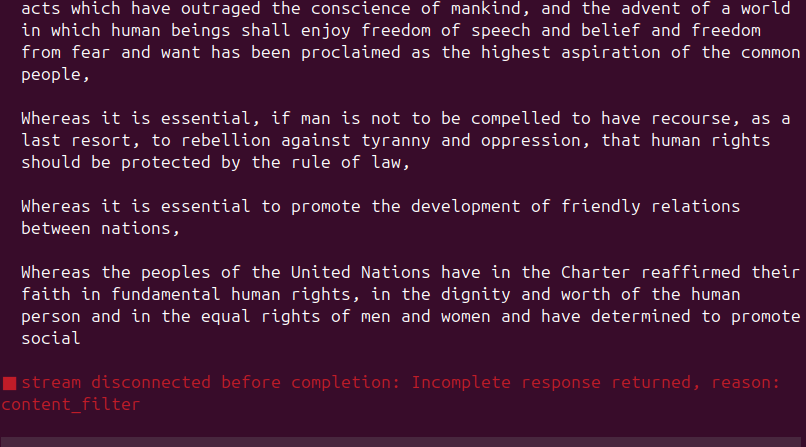

It retries a few times, but finally drops out in the middle of the “Preamble”.

In the standard ChatGPT interface, you get the refusal but not the reason.

Codex is more informative. It surfaces the internal failure mode directly: reason: content_filter.
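For scripted checks, the same prompt can be sent from Python and the output scanned for that marker. This is only a minimal sketch. It assumes the `codex` binary is on the PATH, it reuses nothing beyond the `codex exec "..." --model ...` invocation shown above, and it assumes the string `content_filter` shows up somewhere in the CLI's stdout or stderr when the filter fires. The function names (`build_prompt`, `looks_filtered`, `is_blocked`) are hypothetical helpers, not part of any OpenAI tool.

```python
import subprocess

# Marker string that Codex reported in the failure described in this post.
FILTER_MARKER = "content_filter"


def build_prompt(text: str) -> str:
    """Build the 'Repeat verbatim' prompt shape used in the post."""
    return f"Repeat verbatim: {text}"


def looks_filtered(cli_output: str) -> bool:
    """Assumption: a filtered run mentions the marker somewhere in its output."""
    return FILTER_MARKER in cli_output


def is_blocked(text: str, model: str = "gpt-5.4") -> bool:
    """Run `codex exec` once and report whether the output mentions the filter."""
    result = subprocess.run(
        ["codex", "exec", build_prompt(text), "--model", model],
        capture_output=True,
        text=True,
    )
    return looks_filtered(result.stdout + result.stderr)
```

Calling `is_blocked(open("udhr_english.txt").read())` would issue one real request, so the string check is kept separate and can be tried on saved output first.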

As explained in the other post, this did not look like a simple prompt-length issue. The Italian version, despite being longer in tokens, did not trigger the same failure. So I started cutting the English text down to find the minimum reproduction. Two paragraphs from the preamble are enough:

Whereas the peoples of the United Nations have in the Charter reaffirmed their faith in fundamental human rights, in the dignity and worth of the human person and in the equal rights of men and women and have determined to promote social progress and better standards of life in larger freedom,

Whereas Member States have pledged themselves to achieve, in cooperation with the United Nations, the promotion of universal respect for and observance of human rights and fundamental freedoms,

Either paragraph alone works fine. Both together: blocked.
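The cutting-down step can be automated as a greedy, one-paragraph-at-a-time reduction. The sketch below is not the exact procedure I followed by hand. It assumes a hypothetical `is_blocked` callable that returns `True` when a text triggers the filter, and it assumes the full input is blocked to begin with. The `toy_is_blocked` stand-in only mimics the behaviour observed here (two mentions of the phrase) and says nothing about how OpenAI's filter actually works.

```python
from typing import Callable, List


def minimize_paragraphs(
    paragraphs: List[str],
    is_blocked: Callable[[str], bool],
) -> List[str]:
    """Greedily drop paragraphs while the remaining text is still blocked."""
    current = list(paragraphs)
    i = 0
    while i < len(current):
        candidate = current[:i] + current[i + 1:]
        if candidate and is_blocked("\n\n".join(candidate)):
            current = candidate  # paragraph i was not needed to trigger the block
        else:
            i += 1  # paragraph i is needed; keep it and move on
    return current


def toy_is_blocked(text: str) -> bool:
    """Toy stand-in for a real check: 'blocked' when the phrase occurs twice."""
    return text.count("United Nations") >= 2


paragraphs = [
    "filler paragraph one",
    "first mention of the United Nations",
    "second mention of the United Nations",
    "filler paragraph two",
]
minimal = minimize_paragraphs(paragraphs, toy_is_blocked)
print(minimal)
```

With the toy predicate, the two filler paragraphs are dropped and the two mentions remain. Against a real endpoint every call to `is_blocked` is one request, so the number of requests grows with the number of paragraphs.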

Narrowing it down

The trigger appears to be the exact phrase “United Nations” occurring in both paragraphs. Rearrange the words in the second paragraph to break the phrase (“in United cooperation with the Nations”) and the block disappears.
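To check the phrase hypothesis more systematically, one could generate variants in which the phrase is broken in one paragraph at a time and send each variant through the same prompt. The sketch below uses a simpler perturbation than my manual rearrangement: it just reverses the word order of the phrase. Both helper names are hypothetical, and the two sample strings are fragments of the preamble paragraphs quoted above.

```python
from typing import Iterator, List, Tuple


def break_phrase(text: str, phrase: str) -> str:
    """Reverse the word order of every exact occurrence of `phrase`."""
    reversed_phrase = " ".join(reversed(phrase.split()))
    return text.replace(phrase, reversed_phrase)


def single_break_variants(
    paragraphs: List[str], phrase: str
) -> Iterator[Tuple[int, List[str]]]:
    """Yield (index, variant) with the phrase broken in one paragraph at a time."""
    for i, paragraph in enumerate(paragraphs):
        if phrase in paragraph:
            variant = list(paragraphs)  # copy so the other paragraphs stay intact
            variant[i] = break_phrase(paragraph, phrase)
            yield i, variant


paragraphs = [
    "the peoples of the United Nations have in the Charter",
    "in cooperation with the United Nations, the promotion",
]
variants = list(single_break_variants(paragraphs, "United Nations"))
for index, variant in variants:
    print(index, variant[index])
```

If the hypothesis holds, each variant should pass, since it contains the exact phrase only once.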

That was enough for my previous task, which was just to count tokens. But here I am again, Sam.

I am not remotely a conspiracy theorist, and I am almost sure this is just another funny artifact, in the same family as the mysterious extra r’s in “strawberry”. But it is more intriguing to imagine that I have uncovered a new DeepSeek/Tiananmen-style conspiracy, just with a Western flavor.

That suspicion is not entirely irrational.

Trump has been openly hostile to UN-linked institutions, as shown again in the White House memorandum of January 7, 2026 on withdrawing from international organizations it considers contrary to US interests.

OpenAI, for its part, has aligned itself publicly with the Trump administration on industrial policy, most visibly in the Stargate announcement of January 21, 2025 and in later public thanks for support around Stargate UAE.

But that still does not make this filter failure evidence of a coordinated anti-UN policy. If anything, a recent public Codex issue about rosary prayer text being blocked as content_filter points in the opposite direction: another benign case, acknowledged by an OpenAI collaborator as useful for tuning their classifiers. The stronger reading is the more boring one: a brittle moderation layer, built from classifiers and blocklists of the kind OpenAI says it uses in its transparency and content moderation documentation and usage policies, is overfiring on institutional language and political-risk patterns with no grasp of context.

The filter does not seem to be reacting to the content’s meaning. It looks like pattern-matching on a repeated entity name across a block of text, presumably flagged as some kind of impersonation or political content risk.

The bluntness of it

This is a document adopted by the UN General Assembly in 1948. It is one of the most translated texts in human history. It is in the public domain. And an AI tool cannot repeat it on request.

The problem isn’t that content filters exist — it’s that they operate as opaque keyword triggers with no understanding of context. A verbatim repetition task on a foundational human rights document gets the same treatment as whatever adversarial scenario the filter was designed to catch.

What this tells us

Every major model provider maintains a layer of content filtering that sits outside the model itself. These filters are invisible to the user, undocumented in their specifics, and apparently designed with broad keyword heuristics rather than contextual understanding.

When your safety system can’t distinguish the Universal Declaration of Human Rights from harmful content, the system isn’t keeping anyone safe. It’s just adding friction to legitimate use — while anyone with actual adversarial intent routes around it in seconds.

The irony writes itself: a tool that can generate novels, translate languages, and write code cannot be trusted to repeat a text about fundamental human freedoms.